Last Updated on August 1, 2018 by Laura Coronel

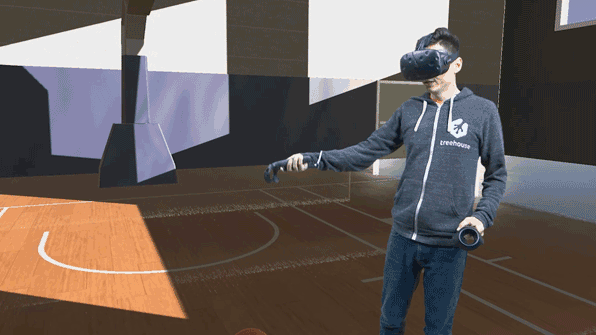

VR performance is the bedrock of a great room-scale VR experience. Headsets like the HTC Vive and Oculus Rift both run at a 90Hz refresh rate (in their current incarnations), meaning that the framerate of your Unity project needs to stay at 90fps (frames per second) or above. Anything less, and the image will start to “judder”, leading to an immersion-breaking experience or, worse, simulator sickness.
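To make the 90fps target concrete, it helps to turn it into a time budget per frame. The snippet below is a small sketch of that arithmetic; the function names are made up for illustration and are not part of Unity.

```python
def frame_budget_ms(refresh_hz: float) -> float:
    """Time available to produce one frame, in milliseconds."""
    return 1000.0 / refresh_hz


def meets_budget(frame_time_ms: float, refresh_hz: float = 90.0) -> bool:
    """True if a frame that took frame_time_ms fits within the refresh interval."""
    return frame_time_ms <= frame_budget_ms(refresh_hz)


print(f"{frame_budget_ms(90):.2f} ms per frame at 90Hz")  # 11.11 ms per frame at 90Hz
print(meets_budget(10.5))  # True
print(meets_budget(12.0))  # False
```

In other words, everything the project does in a frame (rendering, physics, audio, scripts) has to fit in roughly 11 milliseconds.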

It can be very challenging to balance a 90fps+ performance target with the desire to splurge on high-fidelity 3D models, textures, and special visual effects. While graphics take up the bulk of the performance budget in VR, other areas like physics, audio, and scripts can break performance as well. Many VR performance problems only manifest as a project nears completion, but a few simple guidelines at the start can put a new Unity project on a good trajectory. Here’s how I approach new VR projects in Unity.

Use Forward Rendering and MSAA

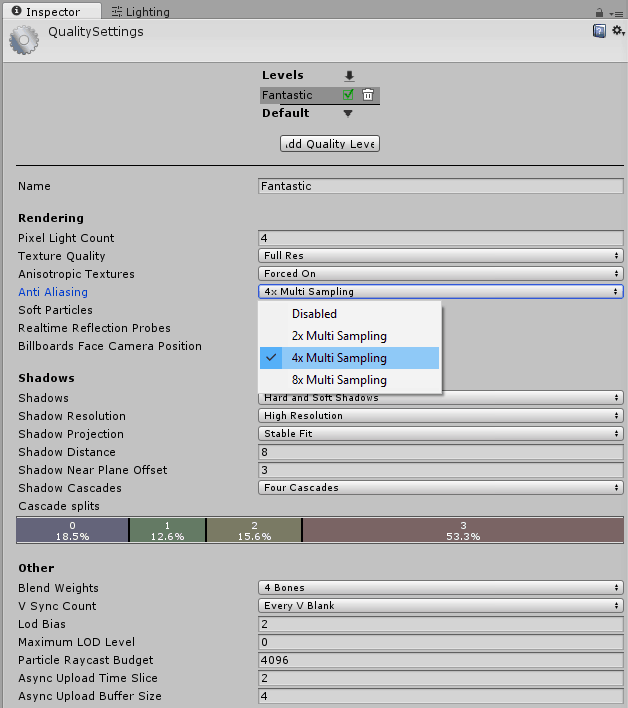

Don’t change the default rendering path in Unity. It’s set to forward already, and that’s what you want. Set Anti-Aliasing to about 4x in the Quality Settings.

For long-time VR developers, this might seem like an obvious piece of advice. Sadly, I didn’t know this when I first started and built a very large project based on deferred rendering only to switch to forward late in the process. That hurt.

A rendering path is a technique for drawing images to a digital display. Unity supports a few rendering paths, but the most common are the forward path and the deferred path. The deferred path renders in multiple passes that decouple geometry and lighting information. Lighting and shading are “deferred” until the end, which is where the name comes from. This means that deferred rendering supports a large number of lights, because the cost of a light generally depends only on the number of screen pixels it affects.

That sounds like a pretty great advantage, but the huge drawback to deferred rendering is that it can only accomplish anti-aliasing through a screen-space shader (called an image effect in Unity). This approach comes at a considerable cost to performance, and the results aren’t always very good. Anti-aliasing smooths out the jagged pixel edges (aliasing) of objects in 3D, and with the relatively low resolutions of VR headsets, this helps quite a lot.

The good news is that forward rendering enables MSAA (multisample anti-aliasing), a great “AA” technique, at a very low cost to performance. The even better news is that forward rendering is enabled by default in Unity’s Graphics settings. All you need to do is make sure that anti-aliasing is turned on in the Quality Settings.

Generally, I like to delete all but one of the quality levels to make sure I’m targeting the experience I’m after. I set MSAA to around 4x, because I feel there’s a noticeable difference going from 2x to 4x, but not much benefit beyond that. While MSAA is nearly “free”, there’s still a performance cost, so I rarely go to 8x. Keep in mind, this could change as headsets become higher resolution. 8x and beyond may become the new normal over time.
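One rough way to see why MSAA is “nearly free” but not free is to count the samples the GPU has to store per frame. This is a back-of-the-envelope sketch only: the 1080x1200 per-eye resolution is the Vive's panel resolution (an assumption brought in from outside this article), the 4 bytes per sample assumes an uncompressed 8-bit RGBA color buffer, and real render targets are usually larger than the panel.

```python
def msaa_samples(width: int, height: int, eyes: int, msaa_level: int) -> int:
    """Total color samples stored for one stereo frame at a given MSAA level."""
    return width * height * eyes * msaa_level


# Assumed per-eye resolution (Vive panel); not a figure taken from Unity.
WIDTH, HEIGHT, EYES = 1080, 1200, 2
BYTES_PER_SAMPLE = 4  # assumed RGBA8, uncompressed

for level in (2, 4, 8):
    samples = msaa_samples(WIDTH, HEIGHT, EYES, level)
    mib = samples * BYTES_PER_SAMPLE / (1024 * 1024)
    print(f"{level}x MSAA: {samples:,} samples, ~{mib:.1f} MiB of color data")
```

Each step up doubles the sample count, which is why the jump from 4x to 8x has to buy a visible improvement to be worth it.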

Set the Stereo Rendering Method to Single Pass

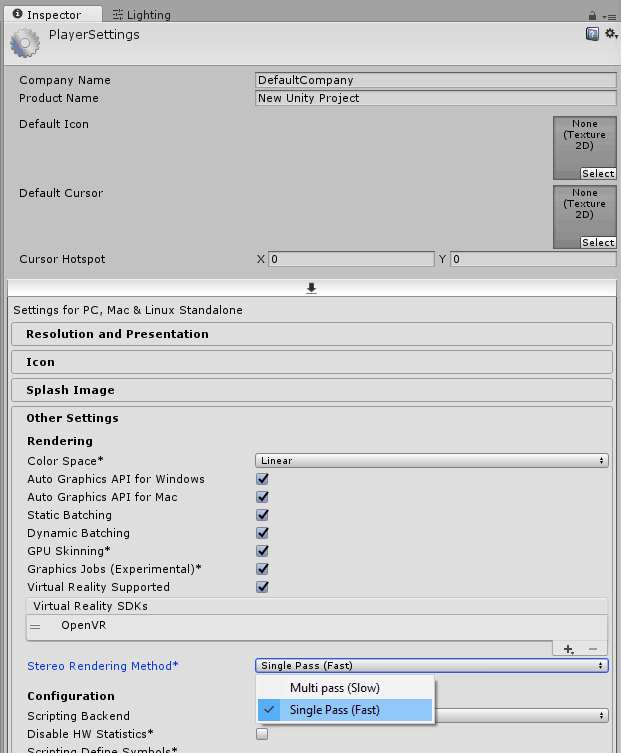

In the Player Settings, set the Stereo Rendering Method to Single Pass.

Images in a VR headset are displayed from two different perspectives, one for each eye. For the last few years of VR development, that meant including two virtual cameras in a Unity project and rendering the scene twice. Ouch! On top of that, the performance cost of any image effects would be doubled, because they would have to be applied to each eye’s image. Double ouch!

Unity has implemented a brilliant solution to this problem that they call single-pass stereo rendering. It combines the two images into a single rendering pass, rather than rendering each one individually, and then splits the image appropriately for each lens in the VR headset. Single pass rendering can be enabled in the Player Settings.

The only downside is that not all image effects and shaders support single-pass stereo rendering just yet. Unity’s own shaders and effects support it out of the box, but if you’re purchasing assets from the Unity Asset Store, it’s worth contacting the developer in the forums or by email to find out whether single pass is supported.

Art Direct for Performance

Design games with performance in mind, rather than trying to optimize at the end.

So, you’re making a forward rendered game using MSAA 4x and single pass stereo. Great! In my opinion, those are firm requirements for contemporary VR development. Now, what game should you make?

Well, I’d love to play a VR game where I’m driving in a jeep through a lush rain forest in pouring rain, being chased down by a T-Rex. I want to see every leaf on every tree, every raindrop, and every scale (or feather) on the dinosaur, all simulated in real-time. The problem is that this idea would be impossible with present technology. Even the “offline” rendering used for Hollywood films struggles to achieve this level of fidelity.

Instead, it’s much better to think about all your ideas, and try to find the ones that are going to be reasonable to render and simulate in real-time at 90fps. That’s going to mean throwing out some ideas that sound pretty fun, or iterating on ideas to make them fit the performance target. However, another way to frame the problem is to look at it as creative art direction. Given limitations that you might not face in other mediums or in non-VR games, what ideas fit the constraints? Would a stylized look render faster and still fit the idea? Could you create an idea that only has a few detailed items on screen at once, rather than a huge scene with lots of detailed items?

There are no simple answers here; only hard decisions and lots of thinking. But thinking is cheap, and performance optimization is really difficult. It’s better to come up with an idea at the start that will perform well rather than trying to force a complicated idea into a performance box during crunch time.

Performance is a deep topic with a lot of nuance, and there’s no way to create a definitive performance checklist that will cover every situation. As you develop your project further, I recommend continuously profiling (using tools like Unity’s Profiler) at every step. If you have any additional performance tips you’d like to share, please do so in the comments!
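As an illustration of what to look for while profiling, the sketch below summarizes a list of frame times against the 90Hz budget. It assumes you have already captured per-frame times in milliseconds by some means (the capture step is not shown), and the sample values are made up for the example rather than measured.

```python
from statistics import mean


def summarize_frames(frame_times_ms, refresh_hz=90.0):
    """Summarize captured frame times against the refresh-rate budget."""
    budget = 1000.0 / refresh_hz
    over = [t for t in frame_times_ms if t > budget]
    return {
        "budget_ms": budget,
        "mean_ms": mean(frame_times_ms),
        "worst_ms": max(frame_times_ms),
        "over_budget": len(over),
        "over_budget_pct": 100.0 * len(over) / len(frame_times_ms),
    }


# Hypothetical capture: mostly fine, with two spikes.
sample = [10.2, 10.8, 11.0, 13.5, 10.9, 22.4, 10.7, 10.6]
report = summarize_frames(sample)
print(report["over_budget"], "of", len(sample), "frames missed the budget")
print(f"worst frame: {report['worst_ms']} ms")
```

The mean can look healthy even when individual frames spike, so the worst frame and the count of missed frames are usually the more telling numbers in VR.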

hello,

A great article! i don’t suppose you could offer advice from your experience about realtime lighting and shadows within unity, steam and Vive. i am on a journey using these 3 elements to develop VR training. Everything looks great in preview mode, but on publish to exe, i lose all shadows, even using the simple steam examples. Cannot figure why, thought perhaps you came across this yourself, or you know of a course that steps through publishing to vive vr? any guidance will be appreciated. thanks & regards.

Thanks for those tips. I don’t know whether those tips included Oculus Rift or not, but mesh edges were flickering on the Oculus screen even at 8x MSAA. I tried post-process AA as well, but it didn’t help. What can I do to make mesh edges sharper? I also increased the RenderScale value from 1 to 1.2.

Try increasing the Scale in Lightmap parameter on the mesh GameObject (e.g. to 5) and decreasing the lightmap resolution to around 10 in the Lighting settings.

Hi, i am having the flickering issue with Vive and Windows Mixed reality VR devices.

I have tried all the above but still no luck..

Any suggestions ?

Thanks

Try removing the normal map. Sometimes normal maps and specular materials cause this artifact; I haven’t figured out any way to solve it other than reducing the normal map intensity.