Last Updated on May 13, 2026 by Laura Coronel

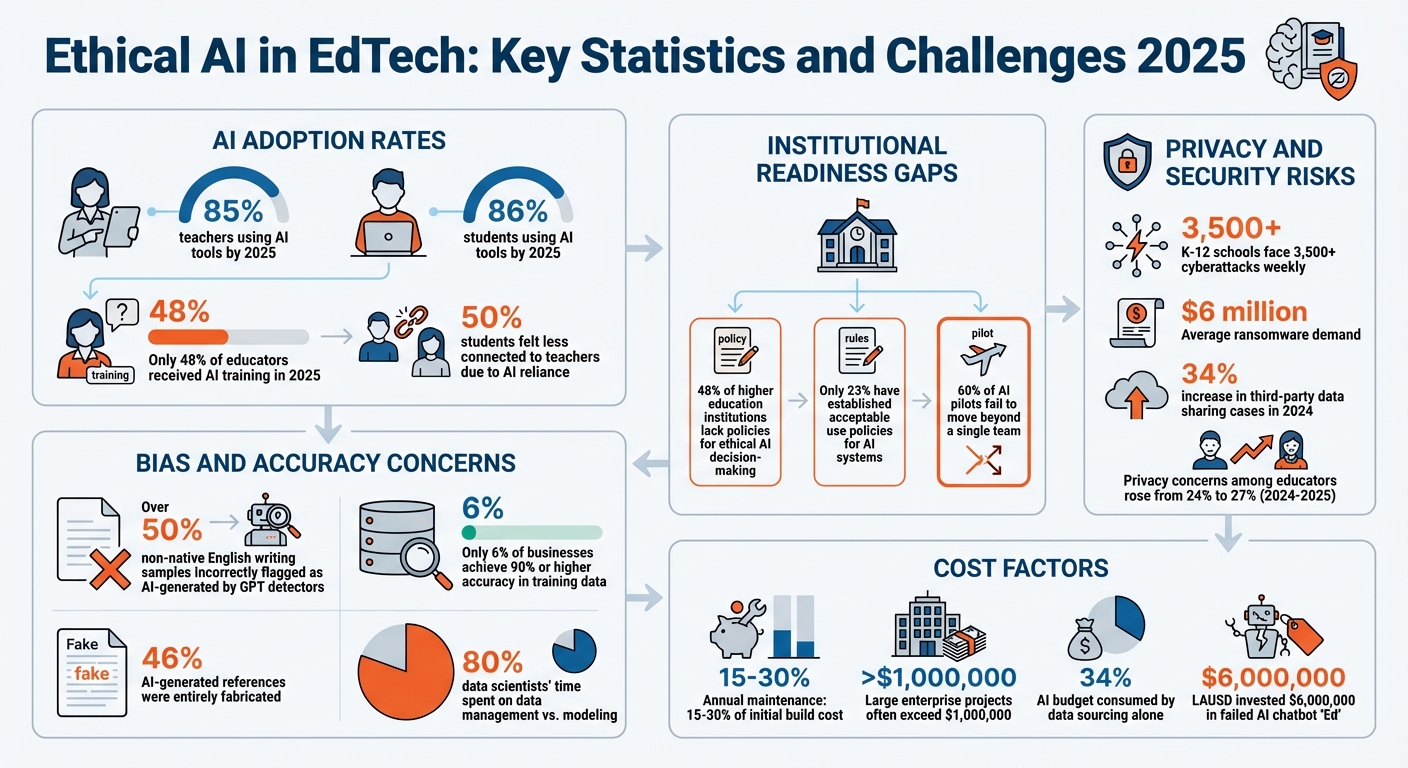

Ethical AI in education is about expanding AI tools like personalized tutoring and automated assessments while ensuring fairness, privacy, and accountability. With 85% of teachers and 86% of students in the U.S. using AI tools by 2025, concerns like bias, privacy risks, and accountability have grown. For example, only 48% of educators received AI training in 2025, and 50% of students felt less connected to teachers due to AI reliance.

Key takeaways:

- Bias risks: AI can amplify inequalities, like mislabeling non-native English writing as AI-generated.

- Privacy concerns: Schools face rising cyberattacks, and data misuse risks are high.

- Accountability: Human oversight is crucial to prevent over-reliance on AI.

Solutions include regular bias audits, diverse training data, clear accountability systems, and compliance with laws like FERPA and GDPR. Ethical AI requires balancing scalability with trust, transparency, and human connection.

Ethical AI in EdTech: Key Statistics and Challenges 2025

Contents

- 1 Ethical AI in Education: Building transparent frameworks for the future

- 2 Core Ethical Principles for AI-Driven Scalability

- 3 Key Challenges in Scaling AI for Personalized Learning

- 4 Bias Amplification at Scale

- 5 Real-Time Processing and Scalability Risks

- 6 Strategies for Bias Detection and Mitigation in EdTech AI

- 7 Privacy and Data Governance in Large-Scale EdTech AI

- 8 Best Practices for Implementing Ethical AI in EdTech Platforms

- 9 Conclusion

- 10 FAQs

Ethical AI in Education: Building transparent frameworks for the future

Core Ethical Principles for AI-Driven Scalability

Scaling AI in education demands a strong foundation built on fairness, transparency, accountability, and equity. These principles act as safeguards, ensuring AI systems enhance learning opportunities rather than deepening existing disparities. When scaling from 1,000 to 100,000 students, issues like bias and transparency grow exponentially, potentially affecting academic outcomes. This section explores how bias arises and how it can be addressed through systematic efforts.

The urgency is clear: nearly 48% of higher education institutions lack policies for ethical AI decision-making, and only 23% have established acceptable use policies for AI systems. Without these ethical guidelines, algorithmic decisions could shape critical areas like course assignments and scholarship eligibility in ways that are neither fair nor transparent.

Let’s dive into how the principles of fairness and accountability can be translated into practical actions.

Fairness and Inclusion in Scalable Systems

Ensuring fairness in AI systems at scale means tackling bias on several levels: individual, group, and multi-level.

- Individual-level bias happens when similar students are treated differently.

- Group-level bias can lead to systemic disadvantages tied to factors like race or socioeconomic status.

- Multi-level bias emerges when efforts to correct individual bias unintentionally harm group equity.

A standout example comes from a high school in the Midwest, where an ethically guided AI system boosted reading comprehension scores for English language learners by 25%. How? By training the AI on diverse linguistic patterns rather than focusing solely on Standard American English. This approach avoided Representation Bias, where underrepresented groups might otherwise receive less accurate predictions due to inadequate training data.

Another challenge, Mapping Bias, occurs when a universal model designed for one setting – like urban schools – fails to account for differences in technology access or local learning environments. Solutions include creating subgroup-specific models and avoiding proxies like zip codes, which can unintentionally lead to discriminatory outcomes.

"Diversity is not just about checking boxes; it’s about creating a team that can bring different perspectives to the table."

- Dr. Jane Smith, AI Ethics Expert

Technical strategies can also promote fairness. For example, adversarial debiasing during model training helps maintain equity across racial and socioeconomic lines. Applying Universal Design for Learning (UDL) principles – offering multiple ways for students to engage and demonstrate understanding, including multilingual options – has shown success. Regular bias audits using diverse datasets are another essential step to catch and address issues before they affect large numbers of students.

While fairness is a cornerstone, it’s just one part of building ethical AI systems at scale. Accountability is equally critical to ensure these systems remain trustworthy and reliable.

Accountability in AI-Driven Decision-Making

Accountability complements fairness by ensuring that humans – not algorithms – remain responsible for AI outcomes. This is crucial, especially when errors occur. For instance, a study found that 46% of AI-generated references were entirely fabricated. Clear accountability mechanisms are essential to address such issues and prevent a breakdown in trust.

"Accountability establishes a continuous chain of human responsibility across the entire AI project delivery workflow."

- Oleksandra Zhura, Author, Litero

Effective accountability starts with clearly defined roles. Developers are responsible for designing models and testing for bias, administrators oversee implementation, and educators make the final judgments. This “human-in-the-loop” approach ensures AI supports – not replaces – professional decision-making. Additionally, regulatory frameworks like the EU AI Act emphasize human oversight for high-stakes applications.

Practical accountability measures include forming oversight committees that include technical experts, ethicists, and student advocates. These committees monitor AI systems and establish redress mechanisms for challenging AI-driven decisions. Some institutions use “Red Light, Yellow Light, Green Light” systems to clarify when AI is allowed, restricted, or prohibited.

Another key shift in accountability is focusing not just on AI’s outputs but also on its underlying processes. Detailed logs of model architectures, data sources, and decision-making rationales improve system transparency and make it easier to identify responsible parties when errors occur. Without this level of transparency, meaningful accountability becomes impossible.

Key Challenges in Scaling AI for Personalized Learning

Taking AI from a small pilot program to a full-scale system that serves tens of thousands of students isn’t as simple as flipping a switch. This transition brings a host of complex challenges, particularly when it comes to managing data, infrastructure, and maintaining reliability.

One of the biggest hurdles is data fragmentation. In most EdTech environments, student records are scattered across multiple systems like Student Information Systems (SIS) and Learning Management Systems (LMS). This inconsistency makes it difficult for AI models to access the unified datasets they need to perform well. To address data fragmentation and ensure your AI systems can access and integrate information from disparate sources, consider using a comprehensive data integration platform. Integrate.io is a fixed-price, low-code data integration and transformation platform that helps teams connect data across databases, APIs, files, CRMs, ERPs, and data warehouses to power analytics, reporting, and operational workflows—without requiring heavy engineering or ongoing maintenance. Shockingly, data scientists spend about 80% of their time on data management tasks rather than actual modeling. To make matters worse, only 6% of businesses report achieving 90% or higher accuracy in their training data, meaning many AI systems are scaling on shaky foundations.

As user numbers grow, infrastructure bottlenecks also become a real problem. For example, when a system scales from 50,000 to 100,000 users, traditional database architectures often struggle with severe read/write delays, which can disrupt the learning experience. For AI-powered tutoring tools, maintaining under 200 ms latency is critical to ensure smooth, interactive experiences. Anything slower can frustrate students and make the system feel unresponsive. To address this, solutions like global Content Delivery Networks (CDNs), edge computing, and optimized data payloads are essential. Additionally, these systems must be designed to work with older devices and unreliable internet connections, often requiring offline-ready architectures or local inference capabilities.

Real-world examples highlight how difficult scaling can be. In 2023, the Los Angeles Unified School District (LAUSD) poured $6,000,000 into an AI chatbot named “Ed”, designed to personalize communication for its 540,000 students. Despite the hefty investment, the project failed due to privacy issues and the complexity of deploying a standalone AI system without proper cross-team collaboration. This highlights a broader issue: nearly 60% of AI pilots fail to move beyond a single team, leading to breakdowns during full-scale rollouts.

"Without strong plumbing, AI remains a demo."

- EdTech Digest

Another critical challenge is model reliability. Large-scale AI systems sometimes produce hallucinated outputs – like incorrect grading suggestions or nonsensical guidance – which can quickly erode trust among users. On top of that, scaling comes with a hefty price tag. Annual maintenance costs can range from 15% to 30% of the initial build cost, and large enterprise-level projects often exceed $1,000,000. These aren’t just technical issues; they’re ethical concerns too. System failures disproportionately harm the students who rely most on dependable educational tools.

Scaling AI for personalized learning is no small feat. From fragmented data to infrastructure limitations and reliability concerns, every step requires careful planning and robust solutions to ensure these systems can truly meet the needs of all students.

Bias Amplification at Scale

When AI systems expand from serving a few hundred students to hundreds of thousands, even small biases can snowball into much larger problems. This phenomenon, called a “bias feedback loop”, happens when AI learns from historical data that reflects existing societal inequalities and then makes predictions that reinforce those same biases. For instance, if an AI labels students from certain demographics as “likely to struggle”, it might automatically assign them simplified content. Over time, these students generate performance data that aligns with the AI’s original prediction, creating a cycle that limits their opportunities. This issue becomes even more pronounced at scale, as seen in automated systems misidentifying non-native writing styles.

The impact of this bias grows when applied across institutions. GPT detectors, for example, incorrectly flag over 50% of writing samples from non-native English speakers as AI-generated. This could lead to thousands of students facing false accusations of academic dishonesty simply because of their linguistic patterns. Similarly, recommendation engines can trap students in “information cocoons”, where high-performing students consistently receive advanced materials while others are repeatedly funneled toward basic content based on flawed initial assessments. These cycles don’t just affect individual students – they undermine institutional fairness and deepen inequities.

"If an AI system identifies students from certain demographics as more likely to struggle academically, it may reduce the number of challenging assignments or limit access to advanced courses, thereby perpetuating a cycle of reduced opportunities."

- EdTech Digest

One of the underlying issues is automation bias, where people tend to trust algorithmic decisions without question. As AI systems are scaled to serve larger populations, educators may stop critically evaluating AI recommendations, allowing biased outcomes to spread unchecked. This risk is compounded by the fact that many AI models function as “black boxes”, meaning even their creators often can’t explain why a specific decision – like a grade or recommendation – was made. Dr. Punya Mishra, Associate Dean at Arizona State University, points out that AI tools are “as biased as we are because they have been trained on us”, highlighting that these systems mirror human prejudices.

Addressing these challenges requires constant vigilance. With only 6% of AI systems achieving 90% or higher accuracy in training data, ongoing monitoring is essential. Breaking down data by race, language background, and learning needs helps ensure these systems don’t turn discriminatory patterns into institutional norms. This makes it clear why proactive measures, like those discussed in the next section, are so critical.

Real-Time Processing and Scalability Risks

When AI systems need to respond instantly – like providing personalized feedback or flagging suspicious activity during an exam – the technical demands skyrocket. Real-time interactivity requires latency below 200 ms, meaning the system must process requests, analyze data, and deliver responses in less than a fifth of a second. Handling this level of responsiveness for thousands or even millions of users demands a robust infrastructure. This often involves a modular microservices architecture, containerization, and edge deployment, which shifts computing closer to users instead of relying solely on distant data centers. Without these components, systems risk crashing under heavy loads, disrupting critical learning moments. Furthermore, integrating these modern systems with older, existing technologies adds another layer of complexity.

Speed isn’t the only challenge here. A significant portion – about 34% of a typical AI budget – is consumed by data sourcing alone. Scaling these systems to accommodate large user bases often means grappling with legacy systems that weren’t designed with AI in mind, leading to data silos and compatibility headaches. In regions with limited digital infrastructure, such as low- and middle-income countries, unreliable internet and electricity make maintaining real-time AI performance nearly impossible, derailing scalability efforts.

"When AI begins operating at scale, gaps and errors [in data] surface, forcing teams to re-clean, re-label, and redesign data pipelines."

- Grids & Guides

Beyond the technical hurdles, real-time systems also face ethical performance risks, which grow more pronounced as scale increases. For instance, AI models can “hallucinate” – producing confident but entirely false information – especially when speed takes priority over accuracy. These errors don’t just undermine system reliability; they also risk reinforcing biases, as rushed outputs often lack proper verification.

The human impact of scaling real-time AI is equally concerning. In large classes with over 250 students, AI feedback tools can unintentionally stifle individual expression, reducing students’ unique voices. Faculty roles may shift from being mentors to mere monitors. Moreover, half of all students report feeling less connected to their teachers due to heavy reliance on AI in classrooms. This over-reliance on AI for real-time tasks can diminish educators’ ability to engage in the deeper intellectual and moral responsibilities that define teaching. These challenges highlight the trade-offs between prioritizing speed and scale versus preserving meaningful human connections in education.

sbb-itb-8595c7c

Strategies for Bias Detection and Mitigation in EdTech AI

Detecting and addressing bias in AI systems requires deliberate, structured efforts throughout the development process. This ensures that AI tools in education promote fairness and equity rather than perpetuating disparities. Combining thorough algorithmic audits with diverse data practices helps identify and address issues before they affect students.

Algorithmic Audits for Bias Detection

Effective audits rely on input from diverse teams – teachers, IT staff, equity coordinators, special education experts, and even parents and students. These groups can help identify how AI tools impact student outcomes, from automated grading systems to content recommendations, attendance alerts, and chatbot interactions.

One key metric for bias detection is the AUC Gap, which measures the absolute difference in Area Under the Curve scores across student subgroups (e.g., race, gender, or socioeconomic status). For example, researchers in November 2020 used the Eedi dataset from the NeurIPS 2020 Education Challenge to test bias mitigation techniques. By applying Exponentiated Gradient Reduction (EGR) via the Fairlearn library, they reduced the AUC Gap from 0.164 to as low as 0.003–0.052. However, this improvement in fairness came with a drop in overall model performance, from 0.702 to 0.537. This highlights the trade-offs between fairness and accuracy that platforms often face.

"The solution lies in proactive evaluation rather than reactive damage control. With the proper audit approach, AI tools can support equity goals rather than undermine them."

- Fely Garcia Lopez, SchoolAI

When working with vendors, request performance data broken down by student demographics – such as English learners, students with disabilities, or students of color – instead of relying on overall accuracy metrics. Additionally, platforms should offer “teacher override” options, allowing educators to adjust AI outputs based on their professional judgment. Tools like IBM AIF360, FairLearn, and Google’s Fairness Indicator can help automate the detection of statistical parity issues.

While audits play a crucial role, the quality and diversity of training data are equally important in reducing bias.

Building Diverse and Representative Datasets

The composition of training data significantly impacts how AI performs across different student groups. Studies have found an average of 3.3% label errors in ten major benchmark datasets, with some error rates as high as 6%. These errors are further compounded when datasets fail to reflect the diversity of the student population. For instance, while about 50% of U.S. public school students participate in free or reduced-price lunch programs, training data often overrepresents students from affluent districts.

Bias mitigation strategies generally fall into three categories:

- Pre-processing: Adjusts data before training, using methods like reweighing or resampling to balance underrepresented groups.

- In-processing: Adds constraints during model training, such as adversarial learning, to penalize biased predictions.

- Post-processing: Modifies outputs after training, adjusting thresholds to ensure equitable representation across student groups.

Using multiple annotators (ideally 3–5) for each data item and escalating disagreements to expert panels can also improve dataset reliability.

In 2024, John Pasmore and Professor Molefi Kete Asante introduced Latimer.ai, a tool designed to reduce bias by using curated resources like books, oral histories, and local archives from underrepresented communities. This approach shifts away from generic web-scraped data, focusing instead on sources that better reflect diverse student experiences. Before deploying AI tools broadly, testing them with small, intentionally diverse student groups can help identify engagement and achievement disparities across demographics.

Privacy and Data Governance in Large-Scale EdTech AI

As AI continues to expand in education, protecting privacy and managing data effectively are just as important as addressing bias and ensuring accountability. These measures work hand-in-hand with ethical frameworks to safeguard student data on a larger scale.

When AI systems grow, so do privacy risks. One major concern is model training – if not strictly regulated, student data could end up in vendors’ training datasets, exposing sensitive information. For instance, cases of third-party data sharing in education increased by 34% in 2024. Meanwhile, K-12 school districts are now targeted by over 3,500 cyberattacks weekly, with ransomware demands averaging more than $6 million.

Another challenge is the potential for re-identifying anonymized data. AI systems can often cross-reference datasets to piece together information that was meant to be de-identified. This creates tension between AI’s need for large datasets and the principle of collecting the minimum data necessary.

"The utility of AI tools is contingent on their access to large volumes of high-quality data… This requirement raises significant concerns about data security, as data breaches or misuse can lead to privacy violations." – Graham Clay, author of AutomatED

Ethical Data Collection and Usage

Responsible data practices start with data minimization – only collecting what’s strictly needed for the AI’s function. For example, platforms can use unique tokens instead of full student IDs or birthdates to personalize lessons, reducing the risk of re-identification. Conducting a Privacy Impact Assessment (PIA) is another critical step to evaluate how data is collected, stored, and retained.

Choosing enterprise licenses over consumer agreements can also make a big difference. Enterprise licenses often forbid using school data for training AI models, while consumer licenses may allow broader data usage. A strong Data Processing Agreement (DPA) should explicitly prohibit selling data, set clear timelines for breach notifications, and require data deletion when contracts end. On the technical side, safeguards like TLS 1.2 or higher for data in transit, AES-256 for data at rest, and role-based access control (RBAC) can help limit access to only those with a “legitimate educational interest”.

"Privacy protections must be embedded throughout the entire software development lifecycle… Real trust stems from systems that embed privacy protections into their architecture, making privacy violations technically difficult, not just contractually prohibited." – AIJ Thought Leader, The AI Journal

Compliance with Privacy Laws and Standards

Beyond ethical practices, strict compliance with privacy laws is essential. For example, FERPA (Family Educational Rights and Privacy Act) governs how personally identifiable information (PII) in U.S. education records is handled. Schools can share data with AI vendors without explicit consent only if the vendor is considered a “school official” performing institutional duties. Violating FERPA can result in fines ranging from $15,000 to $75,000.

COPPA (Children’s Online Privacy Protection Act) focuses on protecting children under 13. Recent 2025 COPPA amendments now require explicit opt-in parental consent for advertising or sharing data with third parties, replacing the older opt-out model. In Europe, GDPR (General Data Protection Regulation) requires a lawful basis for every data processing activity and mandates breach notifications within 72 hours. Non-compliance can lead to fines of up to €20 million or 4% of annual global turnover, whichever is higher.

At the state level, the regulatory landscape is becoming more complex, with over 128 state student privacy laws in effect as of 2026. States like California (SOPIPA), New York (Ed Law 2-d), and Illinois (SOPPA) impose stricter requirements than federal laws. Meanwhile, the EU AI Act categorizes most educational AI systems as “high-risk”, requiring additional risk management and transparency measures, with fines reaching up to €35 million. Institutions that follow structured compliance checklists can deploy AI in 90-120 days, compared to 12+ months for those that encounter compliance issues mid-project.

| Law/Standard | Jurisdiction | Key Requirements |

|---|---|---|

| FERPA | United States | Protects PII in education records; allows vendor access only as "school officials" |

| GDPR | European Union | Requires a lawful basis for data processing and includes the "right to be forgotten" |

| COPPA | United States | Requires parental consent for data collection from children under 13 |

| SOPIPA | California, US | Bans targeted advertising and non-educational student profiling |

| NY Ed Law 2-d | New York, US | Requires encryption standards and a "Parents’ Bill of Rights" |

"Compliance has moved from nice-to-have to make-or-break. Institutions now weigh compliance alongside features and pricing when choosing vendors." – Dmitry Butalov, Head of EdTech at DataArt

Schools with proactive compliance programs often reduce penalties by about 25%, showing how privacy governance can also deliver financial benefits.

Best Practices for Implementing Ethical AI in EdTech Platforms

Scaling ethical AI in education comes with its share of challenges, but following effective practices can help ensure systems remain fair, accountable, and focused on human needs. Leading EdTech platforms establish frameworks built on principles like equity, transparency, privacy, human oversight, and continuous improvement before incorporating AI features. A risk-based evaluation system is often used to assess potential issues, prioritizing efforts based on the likelihood and impact of risks. This approach helps maintain teacher authority and ensures AI serves as a supportive tool rather than a replacement.

The priority is ensuring AI complements, not overrides, professional judgment. Teachers must have the power to adjust AI recommendations based on individual student circumstances or specific needs. For example, in March 2023, an EdTech platform introduced an AI-powered tutor built with a Responsible AI Framework featuring nine guiding principles. To address potential risks, they implemented a Moderation API that flags inappropriate content and alerts an adult linked to the student account, effectively reducing risks by 2025.

Designing with Transparency and Explainability

Trust begins with showing educators and students how AI reaches its decisions. Explainable AI (XAI) methods like LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations) help clarify predictions in tools like grading and personalized learning systems. Instead of relying on generic AI, platforms should define clear guidelines and provide sample responses, with input from educators, to guide AI behavior.

Using Retrieval-Augmented Generation (RAG), AI tutors can draw exclusively from verified, high-quality curriculum data, reducing the risk of delivering inaccurate or biased information. Providing detailed documentation of model designs, data sources, and decision-making processes ensures accessibility for both teachers and students. A strong example of this is Quill.org, which, as of December 2025, has developed over 300 AI models to support literacy. Their rigorous process includes three rounds of testing by a 600-member Teacher Advisory Council, along with manual reviews of over 100,000 student responses annually to fine-tune AI accuracy.

"AI is malleable… carefully annotated datasets and robust evaluation infrastructure make it possible to mold AI for effective, equitable learning." – Quill.org

Treehouse takes a “concepts-first” approach to AI, focusing on guided learning paths that help students develop adaptable skills as AI tools evolve. Instead of random experimentation, their structured system introduces AI tools progressively, ensuring students grasp foundational concepts like data usage and model behavior before moving on.

"The real challenge isn’t learning AI tools. It’s learning them in the right order, for the right reasons." – Treehouse

Using Community and Feedback for Ethical AI

While technical transparency is crucial, involving the community ensures AI systems address real educational needs. Engaging students, educators, parents, and other stakeholders in policy development helps create AI guidelines that reflect diverse perspectives. This is especially important since 75% of educational staff report feeling unheard on critical issues, and just 8% strongly agree that their organization acts on their feedback.

Regular forums, surveys, and discussions can capture user insights that go beyond raw data. For instance, in 2024, the DeKalb County School District used ThoughtExchange’s AI-powered platform to develop its “MIRACLES Framework.” Led by Dr. Yolanda Williamson, the district gathered input from a wide range of community members, improving efficiency in addressing feedback and shaping eight strategic focus areas.

Ongoing monitoring is also essential. Tracking incident logs, user feedback, and data drift helps detect unintended consequences and refine oversight processes. Cross-functional steering groups, including members from Product, Data, and User Research teams, can evaluate new features against ethical standards.

Treehouse further exemplifies this with its supportive community and student forums, which create natural feedback loops. Combining structured learning with community input ensures AI tools address actual educational needs, not just technical ambitions. As SchoolAI puts it:

"The goal is better student outcomes, not perfect technology." – SchoolAI

Conclusion

Scaling AI in education demands that ethical principles guide every technical decision. As mentioned earlier, the AI education market is experiencing rapid growth. Without careful safeguards, this expansion risks amplifying biases, eroding trust, and weakening the human connections that are essential for meaningful learning experiences.

One major issue is the lack of formal ethical training, which remains a significant gap despite the widespread adoption of AI tools. This underscores the importance of ongoing efforts like bias audits, continuous education, and regular feedback to ensure AI aligns with the needs of classrooms.

When implemented thoughtfully, AI can act as a supportive partner rather than a substitute for educators. A great example is Newark Public Schools, which expanded the use of Khan Academy‘s Khanmigo across 66 schools, reaching around 29,000 students. By combining AI tutoring with teacher training and data dashboards, the district saw math score gains triple. This case highlights how ethical and strategic implementation can make AI a tool for scalable, impactful learning.

"AI success requires less magic and more boring execution – clean data pipelines, robust infrastructure, cross-team ownership, and clear metrics."

- Grids & Guides

This quote serves as a reminder that success is built on practical foundations like clean data, strong infrastructure, and collaborative teamwork.

Ethical scalability isn’t a one-time effort – it’s a continuous process. For example, privacy concerns among educators increased from 24% to 27% between 2024 and 2025. This rise highlights the need for ongoing transparency and governance to maintain trust.

"I feel heartened that more and more of us think about the ethical implications of today’s most exciting innovations."

- Timnit Gebru, Executive Director of The Distributed AI Research Institute

These words reflect growing awareness about the importance of ethical considerations in shaping the future of AI in education.

FAQs

How can schools prove an AI tool is fair for all student groups?

Schools can take steps to promote fairness in AI by thoroughly assessing systems for bias before they’re put into use. This means identifying and addressing disparities that might impact groups based on race, gender, socioeconomic background, or learning abilities. Part of this process involves carefully reviewing the training data to spot and mitigate historical biases and testing how the AI performs across a range of diverse groups.

To maintain equity, schools should conduct regular audits of these systems and ensure transparency in how they operate. Engaging with key stakeholders – like students, teachers, and parents – can also provide valuable perspectives and help ensure the AI supports fair outcomes for everyone involved.

What student data should never be used to train or run EdTech AI?

Certain types of student information should always remain private to protect their identity and maintain ethical standards. This includes personally identifiable information (PII) such as:

- Names, addresses, and birthdates

- Social Security numbers

- Medical records and disability details

- Individualized education plans (IEPs)

- Disciplinary records

- Family income

Additionally, any data collected without proper consent or obtained from insecure or compromised sources should never be used. Respecting these boundaries is essential for safeguarding privacy and adhering to responsible AI practices.

Who is responsible when an AI tutor or grader gets it wrong?

Responsibility for mistakes made by AI tutors or graders falls on the human stakeholders who create, implement, and manage these systems. This includes educators, administrators, and developers, who must prioritize accuracy, fairness, and transparency. Ensuring ethical use of AI involves consistent human oversight, routine evaluations, and clear processes for addressing errors. Accountability means not only fixing mistakes but also regularly assessing the system’s fairness and fostering trust in AI-powered educational tools.